Startups Need AI Tokens More Than Cloud Credits

For a long time, founders would come out of accelerators with generous credit packages from Amazon Web Services or Google Cloud Platform, and that support meaningfully extended their runway. Infrastructure was expensive, and anything that reduced that burden gave you more time to figure things out.

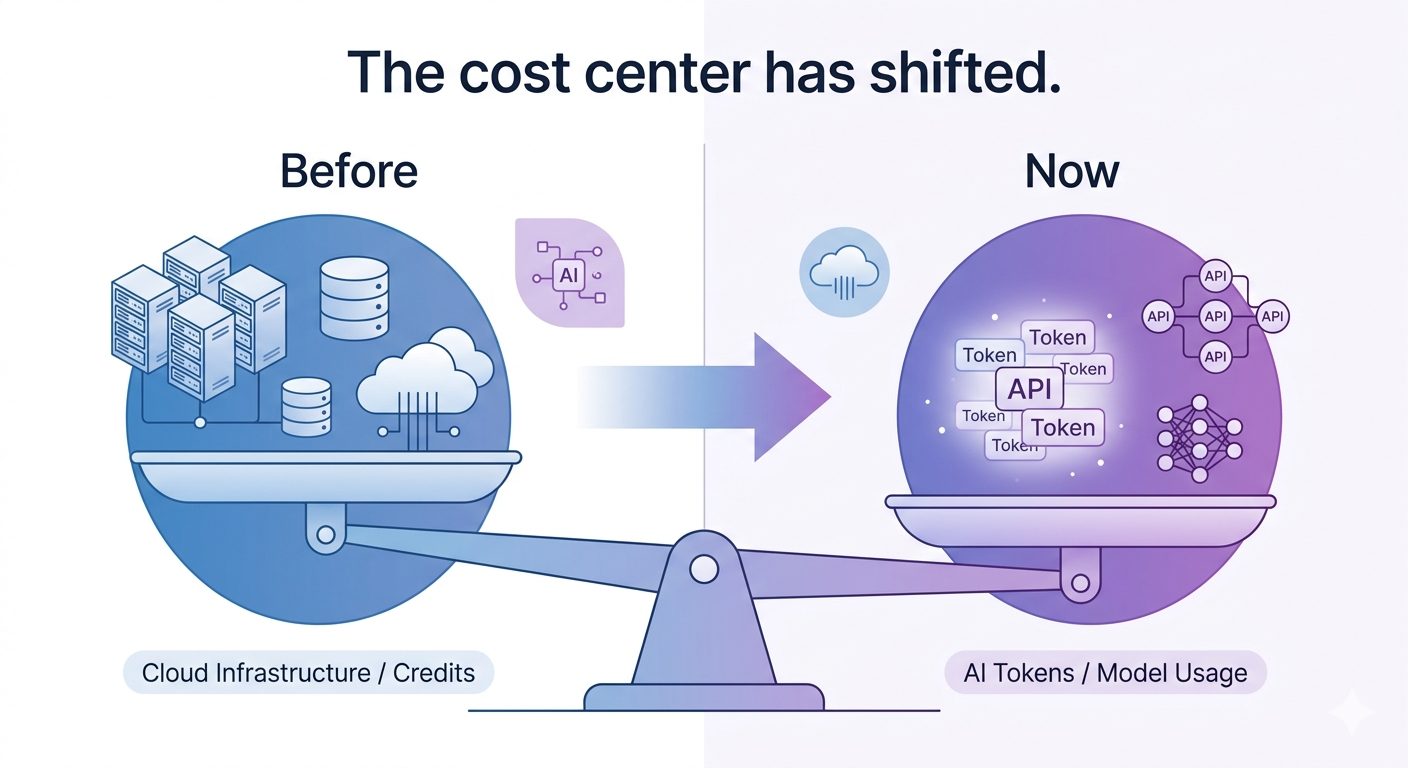

That logic still holds, but the center of gravity has shifted.

More and more early-stage companies are building products where the primary cost is not infrastructure in the traditional sense. It is model usage. Every meaningful interaction with your product might call an API, generate tokens, or run inference on a system you do not control. That cost scales with usage, so the more successful your product becomes, the larger the bill.
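To make that scaling concrete, here is a rough back-of-the-envelope sketch. Every number in it is a made-up assumption for illustration (the user counts, the token counts, and the per-million-token prices), and `UsageProfile` and `monthly_model_cost` are hypothetical names, not anything a provider ships. Plug in your own provider's published pricing before drawing conclusions.

```python
from dataclasses import dataclass


@dataclass
class UsageProfile:
    """Hypothetical description of how a product consumes a model API."""
    monthly_active_users: int
    interactions_per_user: int          # model-backed interactions per user per month
    input_tokens_per_interaction: int
    output_tokens_per_interaction: int


def monthly_model_cost(profile: UsageProfile,
                       input_price_per_million: float,
                       output_price_per_million: float) -> float:
    """Estimate monthly model spend in dollars from token volume and token prices."""
    interactions = profile.monthly_active_users * profile.interactions_per_user
    input_millions = interactions * profile.input_tokens_per_interaction / 1_000_000
    output_millions = interactions * profile.output_tokens_per_interaction / 1_000_000
    return (input_millions * input_price_per_million
            + output_millions * output_price_per_million)


# Assumed prices, in dollars per million tokens. Illustrative only.
INPUT_PRICE = 3.0
OUTPUT_PRICE = 15.0

baseline = UsageProfile(
    monthly_active_users=1_000,
    interactions_per_user=50,
    input_tokens_per_interaction=2_000,
    output_tokens_per_interaction=500,
)

# Same product, ten times the users.
grown = UsageProfile(
    monthly_active_users=10_000,
    interactions_per_user=50,
    input_tokens_per_interaction=2_000,
    output_tokens_per_interaction=500,
)

baseline_cost = monthly_model_cost(baseline, INPUT_PRICE, OUTPUT_PRICE)
grown_cost = monthly_model_cost(grown, INPUT_PRICE, OUTPUT_PRICE)

print(f"Baseline: ${baseline_cost:,.0f} per month")
print(f"After 10x user growth: ${grown_cost:,.0f} per month")
```

Under these assumptions the bill grows in lockstep with users: ten times the usage means ten times the spend, with none of the economies of scale you might expect from a fixed server footprint.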

In that world, cloud credits are still useful, but they are no longer the thing that determines how far you can go. Access to AI models, and the ability to use them affordably, has taken that role.

What I see many founders miss is that this is not just a cost issue. It is a velocity issue. If you are constantly worried about how much each interaction costs, you hesitate. You limit experimentation. You avoid building features that could be transformative because you are not sure you can afford to run them at scale. That constraint shapes the product in ways that are hard to unwind later.

At the same time, there is a quiet shift happening in how these resources are made available. The major platforms have figured out that if they can get startups building on their models early, they become long-term customers. So the programs are evolving.

Through services like Vertex AI on Google Cloud Platform, founders can apply cloud credits directly toward models like Gemini. On the Amazon Web Services side, Bedrock provides access to models from partners like Anthropic, which means those same infrastructure credits can effectively become model usage. There are also more targeted programs, like the AWS Generative AI Accelerator, that are explicitly designed to support AI-native startups with significantly larger credit packages.

None of this is hidden, but it is also not always obvious unless you are looking for it.

This is where the role of accelerators and incubators starts to change. Historically, part of their value was aggregating perks that reduced your burn. That still matters, but the definition of a useful perk is different now. If a program cannot help you access meaningful AI usage, or at least show you how to convert what you are given into that usage, it is missing a big part of what founders actually need.

For founders, this means being much more deliberate about what you ask for and how you structure your stack. Not all credits are equal. A large pool of generic cloud credits is helpful, but a smaller pool that can be directly applied to the models you rely on may be far more valuable in practice. It is worth spending time understanding how each platform allocates credits, what services they apply to, and how that maps to your product.
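A quick way to sanity-check this is to ask how much of your actual cash spend a credit package can offset before it expires. The sketch below is a simplification with invented numbers: `cash_offset` is a hypothetical helper, the spend figures are placeholders, and the 12-month expiry window is an assumption rather than the terms of any real program.

```python
def cash_offset(credit_amount: int,
                eligible_monthly_spend: int,
                months_until_expiry: int) -> int:
    """Dollars of real spend a credit pool can cover before it expires.

    Credits only help where you actually spend money, so the offset is capped
    by both the size of the pool and the eligible spend over the window.
    """
    return min(credit_amount, eligible_monthly_spend * months_until_expiry)


# Assumed monthly spend for an AI-heavy product. Illustrative only.
INFRA_SPEND_PER_MONTH = 1_000    # servers, storage, networking
MODEL_SPEND_PER_MONTH = 5_000    # model API usage
EXPIRY_MONTHS = 12               # assumed credit expiry window

# Package A: a large pool that, in this scenario, covers infrastructure only.
generic_offset = cash_offset(100_000, INFRA_SPEND_PER_MONTH, EXPIRY_MONTHS)

# Package B: a smaller pool that can be applied to model usage.
model_offset = cash_offset(25_000, MODEL_SPEND_PER_MONTH, EXPIRY_MONTHS)

print(f"Large generic pool offsets:  ${generic_offset:,}")
print(f"Small model-eligible pool offsets: ${model_offset:,}")
```

In this made-up scenario the $100,000 package offsets only $12,000 of spend, because most of it expires unused, while the $25,000 package is consumed in full. The headline number on a credit offer matters less than how much of your real bill it can touch.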

It also means thinking carefully about where you commit early. If you build tightly around a particular provider’s ecosystem, you may gain efficiency and better access to credits, but you also create dependencies that can be harder to unwind. There is no single right answer here, but it is a strategic decision, not just a technical one.

The broader shift is straightforward. We have moved from a world where compute was the primary constraint to one where access to intelligence is the constraint. Founders who recognize that early, and who find ways to secure and use that access efficiently, will be able to build faster, iterate more freely, and push further before they need to raise again.

In previous cycles, the question was how cheaply you could run your infrastructure. Increasingly, the question is how affordably you can think at scale.