The AI Stack Every Enterprise Developer Needs in 2026

The essential services powering the next generation of enterprise software

Building enterprise software in 2026 means navigating one of the most complex and fast-moving vendor ecosystems in the history of technology. Beneath every AI-powered enterprise application sits a layered stack of specialized services — and understanding which ones matter, and why, is now a core competency for any serious engineering team.

Start with the Foundation: Large Language Models

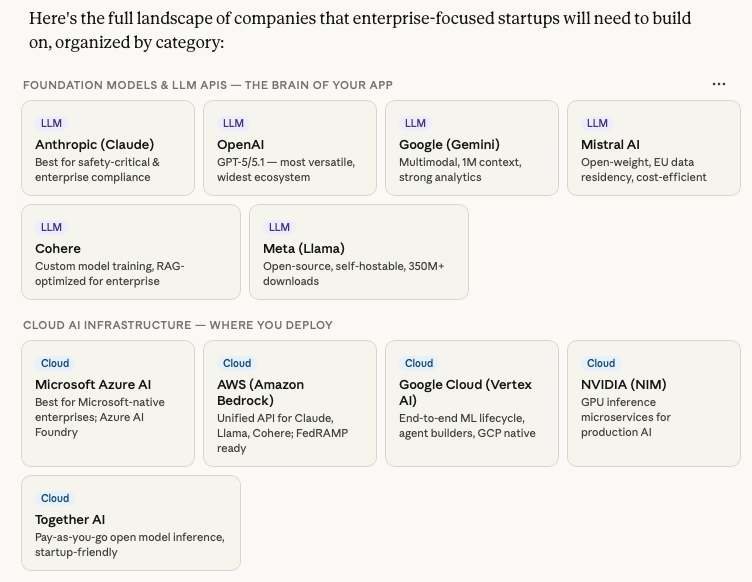

At the core of every AI enterprise app is a foundation model. Anthropic's Claude, OpenAI's GPT series, Google's Gemini, and Meta's open-weight Llama each serve different priorities. Claude is often chosen for safety-critical, customer-facing applications where guardrails and compliance matter most. Gemini's million-token context window makes it powerful for document-heavy workflows. Llama, whose weights are openly available and self-hostable, gives cost-sensitive teams control over their data and infrastructure.

For regulated industries such as finance, healthcare, and legal, deploying through cloud AI platforms like Amazon Bedrock or Azure AI Foundry is often essential. These platforms come with existing compliance coverage (SOC 2 reports, HIPAA eligibility, FedRAMP authorization) that would otherwise take teams months to establish independently. Coverage can vary by service and region, so confirm it for the specific models you plan to use.

The Orchestration Layer: How Your App Thinks

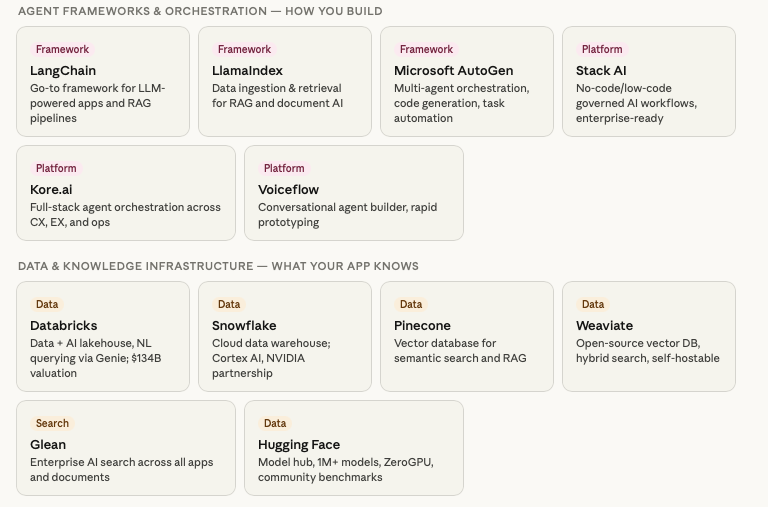

Raw LLM APIs are just the beginning. Enterprise apps require multi-step reasoning, memory, and tool use, and that's where agent frameworks come in. LangChain has become one of the most widely used frameworks for building LLM-powered pipelines, while LlamaIndex specializes in connecting AI models to structured and unstructured data through retrieval-augmented generation (RAG). Microsoft's AutoGen enables multi-agent workflows where specialized AI agents collaborate on complex tasks.
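To make "memory and tool use" concrete, here is a minimal sketch of the loop an agent framework runs on your behalf. It is not the API of LangChain, LlamaIndex, or AutoGen. The model is a hard-coded stand-in (`fake_model`), and the tool name `lookup_order` and the order ID are hypothetical, chosen only for illustration.

```python
from typing import Callable

# Hypothetical tool registry; the tool name and its behavior are illustrative only.
TOOLS: dict[str, Callable[[str], str]] = {
    "lookup_order": lambda order_id: f"Order {order_id}: shipped",
}


def fake_model(history: list[str]) -> dict:
    """Stand-in for an LLM call. A real app would send `history` to a model API."""
    # Until a tool result is in the history, ask for a tool; afterwards, answer.
    if not any(line.startswith("tool:") for line in history):
        return {"type": "tool_call", "name": "lookup_order", "arg": "A123"}
    last_result = history[-1][len("tool:"):].strip()
    return {"type": "final", "text": f"Here is what I found. {last_result}"}


def run_agent(question: str, max_steps: int = 5) -> str:
    """Loop: ask the model, run any tool it requests, feed the result back."""
    history = [f"user: {question}"]  # the conversation history acts as short-term memory
    for _ in range(max_steps):
        step = fake_model(history)
        if step["type"] == "final":
            return step["text"]
        result = TOOLS[step["name"]](step["arg"])
        history.append(f"tool: {result}")
    raise RuntimeError("agent did not finish within max_steps")


print(run_agent("Where is my order?"))
```

The `max_steps` cap is a deliberate design choice: without it, a model that keeps requesting tools would loop indefinitely.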

Vector databases like Pinecone and Weaviate are the memory layer of this stack, enabling semantic search and long-term knowledge retrieval at scale. Combined with data platforms like Databricks and Snowflake, they let enterprise apps query vast internal knowledge bases in natural language.
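The core idea behind semantic search can be sketched in a few lines: documents and queries are represented as vectors, and retrieval returns the documents whose vectors point in the most similar direction. The example below is an in-memory illustration, not the Pinecone or Weaviate API. The three-dimensional vectors and document IDs are made up, whereas real embeddings come from an embedding model and have hundreds or thousands of dimensions.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query: list[float], index: dict[str, list[float]], k: int = 2) -> list[str]:
    """Return the IDs of the k documents most similar to the query vector."""
    scored = [(cosine(query, vec), doc_id) for doc_id, vec in index.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [doc_id for _, doc_id in scored[:k]]


# Toy index; IDs and vectors are hypothetical.
INDEX = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.0],
    "api-rate-limits": [0.0, 0.1, 0.9],
}

print(top_k([0.8, 0.2, 0.0], INDEX))
```

This brute-force scan compares the query against every document. Production vector databases typically use approximate nearest-neighbor indexes to avoid that cost at scale.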

Voice, Security, and the Invisible Infrastructure

As voice AI moves from novelty to necessity, platforms like Deepgram, Vapi, and LiveKit provide the low-latency transcription, synthesis, and telephony infrastructure needed to build real-time conversational agents for sales, support, and operations.

Security, however, is the layer most startups underinvest in. Tools like Lakera Guard protect against prompt injection and data leakage in real time. Noma Security monitors AI agent behavior at runtime. As enterprises deploy AI into sensitive workflows, these tools are increasingly required before a procurement team will sign off on any new vendor.

Observability: Building What You Can Measure

Production AI apps behave differently than traditional software. LangSmith and Weights & Biases provide the tracing, evaluation, and monitoring infrastructure developers need to understand why an AI responded the way it did, catch regressions, and improve model performance continuously.
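As a rough illustration of what tracing captures, the sketch below records the inputs, output, and latency of each call to a wrapped function. It is a hand-rolled stand-in and not the LangSmith or Weights & Biases SDK. The names `traced`, `TRACES`, and `answer` are hypothetical, and `answer` merely uppercases its input in place of a real model call.

```python
import functools
import time

# In-memory trace store; a real system would ship these records to a tracing backend.
TRACES: list[dict] = []


def traced(fn):
    """Decorator that records inputs, output, and wall-clock latency per call."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        output = fn(*args, **kwargs)
        TRACES.append({
            "name": fn.__name__,
            "inputs": {"args": args, "kwargs": kwargs},
            "output": output,
            "seconds": time.perf_counter() - start,
        })
        return output
    return wrapper


@traced
def answer(prompt: str) -> str:
    # Stand-in for a model call, so the example runs without any API key.
    return prompt.upper()


answer("why was my claim denied?")
print(TRACES[-1]["name"], TRACES[-1]["output"])
```

With records like these stored per request, a team can replay the exact inputs behind a bad response and compare outputs before and after a prompt or model change.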

Data Platforms: What Determines How Smart Your App Is

Databricks is doubling down on Lakebase for operational databases built for AI agents, and investing in Genie to let every employee chat with their data for accurate and actionable insights. Paired with Pinecone or Weaviate for vector search, this forms the knowledge backbone of many enterprise AI apps.

The most successful enterprise AI startups treat their stack not just as a set of tools, but as a competitive moat. The teams that invest early in the right combination of models, orchestration, data infrastructure, and security are the ones that win enterprise contracts — and keep them.
